How Many Fans Does It Take to Unlock an Album Stream?

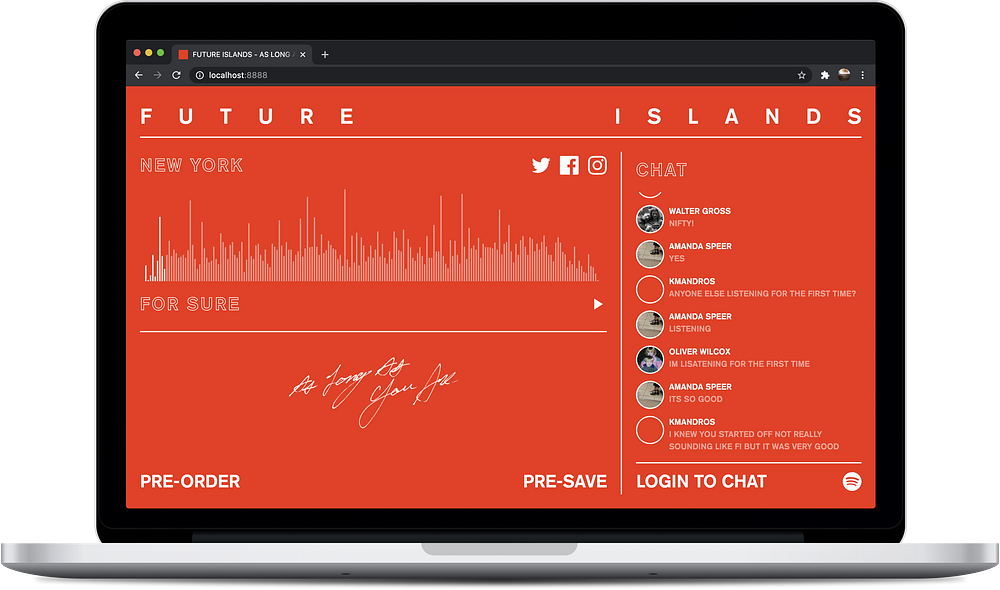

Building a Global Album Premiere for Future Islands

Nine years ago, I launched a web app for Blink-182 in support of their then newly released record Neighborhoods, which allowed you to listen to the album and chat with fans in your neighborhood. I was reminded of this project when Future Islands approached me about developing a similar concept for their new album, As Long As You Are. We would again be placing fans in location-specific listening parties but with a catch: each location’s stream must be unlocked by reaching a certain number of visits. (Nothing makes me feel more nostalgic than a classic “unlock” campaign.) Instead of neighborhoods, we decided to place fans into regions (states, provinces, and prefectures). This raises the question: how many visits should it take to unlock a particular region, and is there a way to derive this threshold dynamically?

Visit the web app today to be placed into your own region’s listening party and read on to learn how we pulled it off.

Determining a Location’s Threshold

An easy way to handle thresholds is to just pick a number and use the same number for each region. The issue here is that the number you pick may be impossible to achieve in one region and too easy to achieve in another. It would be better if this threshold took into consideration the population of said region. Luckily, we can get that data in most cases.

It starts by getting the coordinates of the user. You can do this passively by attempting to turn their IP address into coordinates or more directly, by asking the user to allow access to their device location. We’re using both methods in our app. When the user initially visits the page, we use MaxMind’s GeoIP service to return geographic information about the IP, including coordinates. These coordinates are then passed to Mapbox’s Geocoding service which returns all sorts of data types about the geographic location, including its region. In this region data, we’ll get many properties like the region name and coordinates. We also receive the Wikidata identifier. Using this id, we can query the Wikidata API to get the most recent population count if it is available. All of this happens in a second or two.
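To make the lookup chain a little more concrete, here is a minimal sketch of the last two steps. It assumes Mapbox's v5 reverse geocoding URL format, Wikidata's `Special:EntityData` JSON documents, and that population lives under property P1082 with a "point in time" qualifier (P585). The function names are hypothetical and this is not the app's actual code.

```javascript
// Assumed Mapbox reverse geocoding URL, limited to region features.
function regionLookupUrl(lng, lat, token) {
  const base = 'https://api.mapbox.com/geocoding/v5/mapbox.places'
  const query = new URLSearchParams({ types: 'region', access_token: token })
  return `${base}/${lng},${lat}.json?${query}`
}

// Wikidata entity document URL for an id such as "Q99".
function entityUrl(wikidataId) {
  return `https://www.wikidata.org/wiki/Special:EntityData/${wikidataId}.json`
}

// Pick the most recent population (P1082) from a parsed entity,
// using the "point in time" qualifier (P585) to order the statements.
function latestPopulation(entity) {
  const statements = (entity.claims && entity.claims.P1082) || []
  let best = null
  for (const statement of statements) {
    const value = statement.mainsnak && statement.mainsnak.datavalue
    if (!value) continue
    const dated = statement.qualifiers && statement.qualifiers.P585
    const time = dated && dated[0].datavalue ? dated[0].datavalue.value.time : ''
    const amount = Number(value.value.amount) // amounts look like "+39512223"
    if (best === null || time > best.time) best = { time, amount }
  }
  return best ? best.amount : null
}
```

The entity passed to `latestPopulation` would be `data.entities[id]` from the fetched document. It returns `null` when no population is recorded, which matches the "if it is available" caveat above.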

So now we know the user’s region and that region’s population, but how do we determine the appropriate threshold? I thought of a rather simple solution. Future Islands has 1.6 million monthly listeners on Spotify. Earth has 7.6 billion people. If we divide the first by the second, we can surmise that roughly 0.0002 (about 0.02%) of the people on earth listen to Future Islands. 😅 We can then multiply this ratio (or a fraction of it) by the location’s population to come up with a fair dynamic threshold. There’s no point in taking this stuff too seriously, as the band more likely has different levels of fans in different locations, but this at least allows us to set it and forget it (fairly) for the entire earth.
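Here is that arithmetic as a small sketch. The listener and population figures come from the paragraph above. The `thresholdFor` name, the `fraction` parameter, and the minimum of one visit are assumptions added for illustration.

```javascript
// Figures quoted in the article
const MONTHLY_LISTENERS = 1.6e6 // Spotify monthly listeners
const WORLD_POPULATION = 7.6e9 // people on Earth

// Share of the world that listens to the band (about 0.0002)
const LISTENER_RATIO = MONTHLY_LISTENERS / WORLD_POPULATION

// Hypothetical helper: `fraction` scales the ratio down, and `minimum`
// is a floor so sparsely populated regions still need at least one visit.
function thresholdFor(population, fraction = 1, minimum = 1) {
  const raw = population * LISTENER_RATIO * fraction
  return Math.max(minimum, Math.round(raw))
}

console.log(thresholdFor(6000000)) // a region of 6 million people
console.log(thresholdFor(6000000, 0.1)) // same region, one tenth of the ratio
```

With the full ratio, a region of 6 million people needs 1,263 visits. Using a tenth of the ratio brings that down to 126, which is the kind of tuning the "fraction of this value" remark allows.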

Serverless Design

Keeping track of all this data and being able to react to it as it happens requires a smart database setup. I’ve been a Serverless convert for a while now, so I’ve used DynamoDB in this app. We’ve set up tables for locations, visits, and chat messages. Locations (and their thresholds) are created dynamically as users visit the app. Each visitor is stored as an IP address and location id pairing to make sure visits are unique. When a new visit is registered, we use a DynamoDB Stream to increment that location’s visitor count accordingly. All of these visits, messages, and any unlock confirmations are sent back to awaiting clients in real time using Pusher. I love seeing all this data get channeled to the appropriate location.
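As a rough sketch of how the unique visit and counter logic might look, the helpers below build DynamoDB DocumentClient-style request parameters and tally a stream event. The table names (`visits`, `locations`), attribute names, and function names are all hypothetical, since the schema isn't described here.

```javascript
// Params for a conditional put: the write fails if this IP and location
// pairing already exists, which keeps visits unique.
function uniqueVisitParams(ip, locationId) {
  return {
    TableName: 'visits',
    Item: { locationId, ip, createdAt: Date.now() },
    ConditionExpression: 'attribute_not_exists(ip)'
  }
}

// Tally new visits per location from a DynamoDB Stream event.
// Only INSERT records count; stream images use typed attributes ({ S: ... }).
function countNewVisits(event) {
  const counts = {}
  for (const record of event.Records) {
    if (record.eventName !== 'INSERT') continue
    const id = record.dynamodb.NewImage.locationId.S
    counts[id] = (counts[id] || 0) + 1
  }
  return counts
}

// Params for an atomic counter update on the location item.
function incrementParams(locationId, amount) {
  return {
    TableName: 'locations',
    Key: { id: locationId },
    UpdateExpression: 'ADD visits :amount',
    ExpressionAttributeValues: { ':amount': amount }
  }
}
```

A stream handler could call `countNewVisits` on each batch, send one `incrementParams` update per location, and then compare the new count against the threshold before notifying clients.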

I actually did some research on AWS’s own WebSockets API and it looks promising. However, I’ve never failed with Pusher so we happily paid the price for their solution.

Spotify Powered Chat

We knew we wanted a chat associated with the app so fans could socialize while waiting for their stream to be unlocked (and while experiencing the music for the first time). While the stream would not actually be powered by a streaming service (more on that later), I thought it made sense to use Spotify as the authentication required to enter the chat. Future Islands has a strong listener base on Spotify, but I’ve always found that Spotify’s own social elements were lacking. This app gave us an opportunity to test how a Spotify user might integrate into a more social listening environment.

This can be done very simply by using Spotify’s client-side Implicit Grant Flow authentication, which I have written about before. Once you have an authorized Spotify user, you can send messages to an awaiting DynamoDB endpoint and then use Pusher, once again, to send those messages to all awaiting clients. I can understand why Spotify doesn’t want to bog down their own software with too many social features. It’s up to us developers to experiment with how these features might perform. Luckily, Spotify grants us access to a platform which allows exactly this.
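For reference, a minimal sketch of the two client-side pieces of an implicit grant: building the authorize URL and reading the token back from the redirect's URL fragment. The function names are hypothetical, and the parameters follow the flow as Spotify documented it at the time. It's worth checking Spotify's current authorization docs, since supported flows change.

```javascript
// Build the URL the user is sent to in order to log in with Spotify.
// response_type=token is what makes this the implicit grant.
function authorizeUrl(clientId, redirectUri, scopes = []) {
  const query = new URLSearchParams({
    client_id: clientId,
    response_type: 'token',
    redirect_uri: redirectUri,
    scope: scopes.join(' ')
  })
  return `https://accounts.spotify.com/authorize?${query}`
}

// After login, Spotify redirects back with the token in the URL fragment,
// e.g. #access_token=...&token_type=Bearer&expires_in=3600
function tokenFromHash(hash) {
  const params = new URLSearchParams(hash.replace(/^#/, ''))
  return params.get('access_token')
}
```

In the browser you would call `tokenFromHash(window.location.hash)` on the redirect page and send the resulting token along with each chat message so the server can identify the user.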

Faux Livestream

Since our experience was launched before the album was distributed to streaming services, we needed to power the audio stream ourselves. This can be done very simply by hosting an MP3 of the record on a CDN somewhere. However, once you add a chat to the experience, you have to assume users are going to think that they’re all listening to the same track, at the same point. If you only have a single room, you may be able to do this by live streaming the record using YouTube. However, our application has an unknown number of rooms with varied unlocking times for each, and we’d like each stream to start as soon as the location becomes unlocked. 😓

To pull this off, I greatly simplified the idea of what a livestream is. If we know the point in time at which the location was unlocked and we know the duration of the entire album, we should be able to calculate the current playing position of the album at any point in time. So, for example, if you arrived at an unlocked location 10 minutes after it was unlocked, you should be listening to the record 10 minutes in. Here’s a little code:

    // Get current time and when location was unlocked
    let now = Date.now()
    let unlocked = unlockedAt

    // Calculate difference in ms
    let ms = unlocked - now

    // Convert ms to absolute seconds
    let seconds = Math.abs(ms / 1000)

    // Calculate total playbacks
    let playbacks = seconds / audio.duration

    // Get current position
    let current = (playbacks - Math.floor(playbacks)) * audio.duration

Using this dynamic position, we can then simply seek the album to this position when the user begins streaming.

    audio.currentTime = current
    audio.play()

This technique is very similar to how I handled R.E.M.’s Monster A/B player and could easily be applied when streaming directly from a DSP.
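The position calculation can also be wrapped in a small pure function, which makes it easy to check without an audio element. This is a sketch rather than the app's code: `streamPosition` is a hypothetical name, and the 45 minute album length in the usage lines is made up for illustration.

```javascript
// unlockedAt and now are millisecond timestamps; duration is in seconds.
// The remainder makes the album loop once it has played all the way through.
function streamPosition(unlockedAt, duration, now = Date.now()) {
  const elapsed = Math.max(0, now - unlockedAt) / 1000
  return elapsed % duration
}

const albumLength = 45 * 60 // hypothetical album duration in seconds

// Arriving 10 minutes after the unlock puts you 600 seconds in.
console.log(streamPosition(0, albumLength, 10 * 60 * 1000))

// Arriving 50 minutes after the unlock puts you 300 seconds into the second loop.
console.log(streamPosition(0, albumLength, 50 * 60 * 1000))
```

Using the remainder operator gives the same result as subtracting the whole number of playbacks, and clamping at zero guards against a client clock that runs slightly behind the unlock time.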

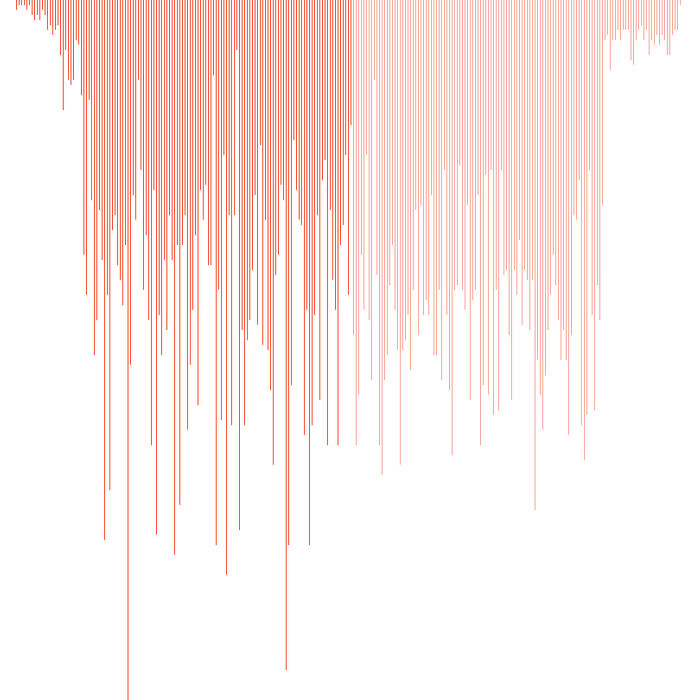

Waveform

I proposed that we include a simple audio visual to keep the interface interesting as users streamed the record. Initially, I was thinking this would be a Web Audio powered real-time audio spectrum but I quickly learned that was not very compatible with my faux streaming solution. The issue was that Web Audio requires an entire track to be loaded before you can get the audio data required to create a visualization. Since my streaming solution involved one large track of the entire record, this would have required a long loading period for the user and I was obsessed with making this experience as performant as possible.

This meant that we required a visual solution which was pre-rendered. Luckily, my time at SoundCloud revealed the answer: the waveform. SoundCloud pioneered employing the waveform image as a way to visualize audio on their website and embeddable players. When I was working there, I spent A LOT of time experimenting with the visual. Hell, Johannes Wagener and I even wrote a library called Waveform.js that made drawing them easy. (Which isn’t supported anymore, by the way.) I think one of the best solutions to drawing a waveform is starting with an array of loudness levels. This array can then be used to dynamically draw the waveform responsively using canvas or SVG. This is the technique we employed in Waveform.js and the one SoundCloud itself uses.

In order to get this array of levels, I used the incredible audio feature extraction library Meyda. Meyda allows you to extract all sorts of audio features offline without the need to play back the track. This includes the RMS values, which give us a rough idea of the loudness of the audio.

    meyda glada.wav rms --o=rms.json --format=json

I then wrote a companion script which greatly simplified this data into 1800 normalized levels in the range of 0.0-1.0, where 0.0 is not loud and 1.0 is very loud. This was then exported for each track and stored in our project locally using @nuxtjs/content. Finally, I used this levels data array to generate a responsive visual using canvas. Check out this CodePen. This visual is constantly being rendered to handle any window resizing. This constant rendering also allows us to visualize the progress of a track, which we can do by simply changing the color of bars which have been played.
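A companion script like that could look something like the sketch below. It assumes the RMS output has already been parsed into a flat array of numbers (the exact shape of Meyda's JSON isn't covered here), and `toLevels` is a hypothetical name. It averages the values into evenly sized buckets and then scales everything by the loudest bucket.

```javascript
// Reduce a long list of RMS values to `count` levels between 0.0 and 1.0.
function toLevels(rms, count = 1800) {
  const size = rms.length / count // values per bucket (may be fractional)
  const levels = []
  for (let i = 0; i < count; i++) {
    const start = Math.floor(i * size)
    const end = Math.max(start + 1, Math.floor((i + 1) * size))
    const bucket = rms.slice(start, end)
    const sum = bucket.reduce((total, value) => total + value, 0)
    levels.push(bucket.length ? sum / bucket.length : 0)
  }
  // Normalize against the loudest bucket so the peak becomes 1.0
  const peak = Math.max(...levels)
  return levels.map(level => (peak > 0 ? level / peak : 0))
}

// Six values reduced to three levels
console.log(toLevels([0, 0, 2, 2, 4, 4], 3))
```

The small example logs `[0, 0.5, 1]`. Each level then maps directly to a bar height when drawing, and a bar's index divided by the number of levels tells you whether playback has passed it yet.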

Thanks

Naomi Scott at Beggars Group and Will Tompsett at 4AD reached out about this project on Sept 11th and we launched it on Oct 8th. Never once did we jump on a Zoom or phone call. We just efficiently built the thing. I really have to thank them for not only the initial concept but also the incredibly inspiring way they work. If you get a chance to work with this duo, take it. I must also thank Ben Dickey and Constant for trusting our approach to the app. I now get another punch on my Constant Artists freelance card. (Working my way through the roster.)

Thanks to the Future Islands fans for coming from all over to participate in this momentous occasion. Who knew Future Islands had such a huge draw in Pyongyang? Finally, thanks to the band for green-lighting the concept and recording such an incredible record.